"Our integration with the Google Nest smart thermostats through Aidoo Pro represents an unprecedented leap forward for our industry."

- Antonio Mediato, founder and CEO of Airzone.

AI is everywhere these days, helping us make decisions faster and smarter. But sometimes it feels like these AI systems are a bit of a mystery: we get the results, but not the “why” behind them. That’s where explainable AI, or XAI, comes in. Positioned within the broader landscape of AI and ML development, it’s all about making AI’s decisions clear and understandable, so we can actually trust the technology we’re relying on. In this blog, we’ll chat about what XAI really means, why it matters, and where it’s headed next.

When we talk about machine learning, most people imagine these super-smart systems making predictions or decisions with incredible accuracy. But here’s the catch: many traditional machine learning models are what you might call “black boxes.” They take in data, crunch the numbers, and spit out results, but exactly how they arrive at those results is often a mystery.

This lack of transparency happens for a few reasons. First, some of the most powerful models, like deep neural networks or ensemble methods, involve incredibly complex mathematical operations and layers upon layers of computations. These models might have thousands or even millions of parameters interacting in ways that are nearly impossible for humans to interpret directly. It’s like asking someone to explain exactly how their brain formed a specific thought. We know it happens, but the step-by-step reasoning isn’t clear.

Second, traditional models often focus heavily on performance metrics such as accuracy or precision. The goal has been to get the best results possible, sometimes at the expense of interpretability. In fields like image recognition or speech processing, this might be fine because the outcome is clear-cut. But when machine learning is applied to critical decisions (think credit approvals, medical diagnoses, or legal judgments), understanding why a model made a decision becomes vital.

Another reason transparency is tough to achieve is the sheer volume and complexity of the data these models use. When models are trained on high-dimensional data sets with hundreds or thousands of features, figuring out which factors influenced a prediction is no simple task. It can feel like trying to untangle a giant ball of yarn that’s been knotted up.
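One widely used way to start untangling which factors matter is permutation importance: shuffle one feature at a time and see how much the model's accuracy drops. The sketch below is only an illustration. The `black_box` function, the synthetic data, and the three-feature layout are all assumptions made up for this example, not part of any real system.

```python
import random

def black_box(row):
    """Stand-in for an opaque model (hypothetical): uses features 0 and 1, ignores 2."""
    return 1 if 3.0 * row[0] + 0.5 * row[1] > 1.75 else 0

def accuracy(model, rows, labels):
    hits = sum(1 for row, label in zip(rows, labels) if model(row) == label)
    return hits / len(rows)

def permutation_importance(model, rows, labels, n_features, rng):
    """Accuracy drop observed when each feature column is shuffled in turn."""
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        column = [row[j] for row in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled = [row[:j] + [value] + row[j + 1:] for row, value in zip(rows, column)]
        importances.append(baseline - accuracy(model, shuffled, labels))
    return importances

rng = random.Random(0)  # fixed seed so the run is repeatable
rows = [[rng.random() for _ in range(3)] for _ in range(500)]
labels = [black_box(row) for row in rows]  # synthetic labels, so baseline accuracy is 1.0
importances = permutation_importance(black_box, rows, labels, 3, rng)
for j, drop in enumerate(importances):
    print(f"feature {j}: accuracy drop {drop:.3f}")
```

A feature the model leans on heavily shows a large drop, while a feature it ignores shows none. Real projects would usually reach for a library implementation and repeat the shuffle several times to smooth out noise.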

Finally, the way these traditional models are built and deployed sometimes contributes to the problem. Many algorithms were created in academic or research settings with a focus on pushing performance boundaries rather than explainability. This means that when these models are brought into real-world applications, businesses and end users are often left scratching their heads.

All of this adds up to a real challenge: how do you trust a system when you do not fully understand how it works? Without transparency, it’s difficult to detect errors, biases, or unfair practices baked into the model. It also makes it harder to meet regulatory requirements and gain user trust.

This is exactly why explainable AI is gaining so much attention. It aims to open up that black box, giving us the tools to understand, trust, and improve machine learning models in ways that traditional approaches just can’t.

Explainable AI, or XAI for short, is all about making machine learning models understandable to humans. Instead of treating AI like a mysterious black box that gives you answers without explanation, XAI focuses on shining a light inside that box. It helps us see how and why a model made a certain decision or prediction.

But why does that even matter? After all, if a model is accurate, shouldn’t that be enough? The truth is, accuracy alone doesn’t tell the full story. Imagine if a doctor gave you a diagnosis but refused to explain their reasoning. You’d probably want to know the why behind it before trusting the advice, right? The same goes for AI. When decisions impact people’s lives, whether it’s approving a loan, diagnosing an illness, or screening job candidates, we need transparency and trust.

Explainable AI gives us that transparency by providing insights into the model’s inner workings. It can show which factors influenced a decision the most or highlight patterns the model picked up on. Sometimes it even explains things in a way that’s easy for non-experts to understand, like saying, “This loan was denied mainly because of your credit history and income level.”
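To make that concrete, here is a minimal sketch of how a plain-language reason could be generated for a simple linear scoring model. Everything in it is an assumption for illustration: the feature names, the weights, the "average applicant" baseline, and the approval threshold are invented, and real credit models are far more involved.

```python
# Hypothetical linear credit-scoring model; every number here is made up for illustration.
# "credit history" is in years, "income level" in thousands per year.
WEIGHTS = {"credit history": 0.30, "income level": 0.02, "open accounts": -0.10}
AVERAGE_APPLICANT = {"credit history": 8.0, "income level": 60.0, "open accounts": 3.0}
BIAS = 1.0  # score of the average applicant; scores above 0 are approved

def contributions(applicant):
    """Per-feature contribution: weight times distance from the average applicant."""
    return {name: WEIGHTS[name] * (applicant[name] - AVERAGE_APPLICANT[name]) for name in WEIGHTS}

def explain(applicant, top_n=2):
    parts = contributions(applicant)
    score = BIAS + sum(parts.values())
    approved = score > 0
    # For an approval, list the features that helped most; for a denial, the ones that hurt most.
    ranked = sorted(parts, key=parts.get, reverse=approved)
    reasons = " and ".join(ranked[:top_n])
    outcome = "approved" if approved else "denied"
    return f"This loan was {outcome} mainly because of your {reasons}."

applicant = {"credit history": 1.0, "income level": 25.0, "open accounts": 4.0}
print(explain(applicant))
```

For this made-up applicant, the short credit history and low income pull the score down the most, so those are the two reasons the sentence names. With non-linear models the same idea is typically approximated with attribution methods rather than read straight off the weights.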

Another important reason XAI matters is accountability. As AI becomes a bigger part of industries like finance, healthcare, and law enforcement, there’s growing pressure from regulators, consumers, and companies themselves to ensure AI isn’t biased or unfair. Explainability helps detect when a model might be favoring one group over another or making mistakes due to flawed data.
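A first-pass check for that kind of favoritism can be as simple as comparing approval rates across groups. The sketch below assumes a hypothetical audit log of `(group, approved)` pairs and uses the four-fifths rule of thumb as an illustrative threshold. It is a starting point for a closer look, not a complete fairness audit.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Share of approved decisions per group, given (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        approved[group] += int(was_approved)
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest group approval rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0

# Hypothetical audit log of model decisions: (group, approved).
decisions = [("A", True)] * 3 + [("A", False)] + [("B", True)] + [("B", False)] * 3
rates = approval_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
if ratio < 0.8:  # four-fifths rule of thumb, used here only as an illustrative threshold
    print("Approval rates differ enough to warrant a closer look.")
```

A low ratio does not prove the model is unfair on its own, but it tells the team where to dig into the explanations next.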

On top of that, XAI supports continuous improvement. When developers understand how their models make decisions, they can identify weaknesses or areas where the model might be overfitting or misinterpreting data. That leads to better models and more reliable AI over time.

In short, explainable AI bridges the gap between powerful, complex technology and the people who rely on it. It builds trust, supports fairness, and makes AI more responsible. As machine learning continues to shape our world, XAI will be essential for making sure those changes happen in ways we can understand and feel confident about.

Move Beyond the Black Box

Adopt explainable AI solutions that meet regulatory standards, reduce bias, and build user trust.

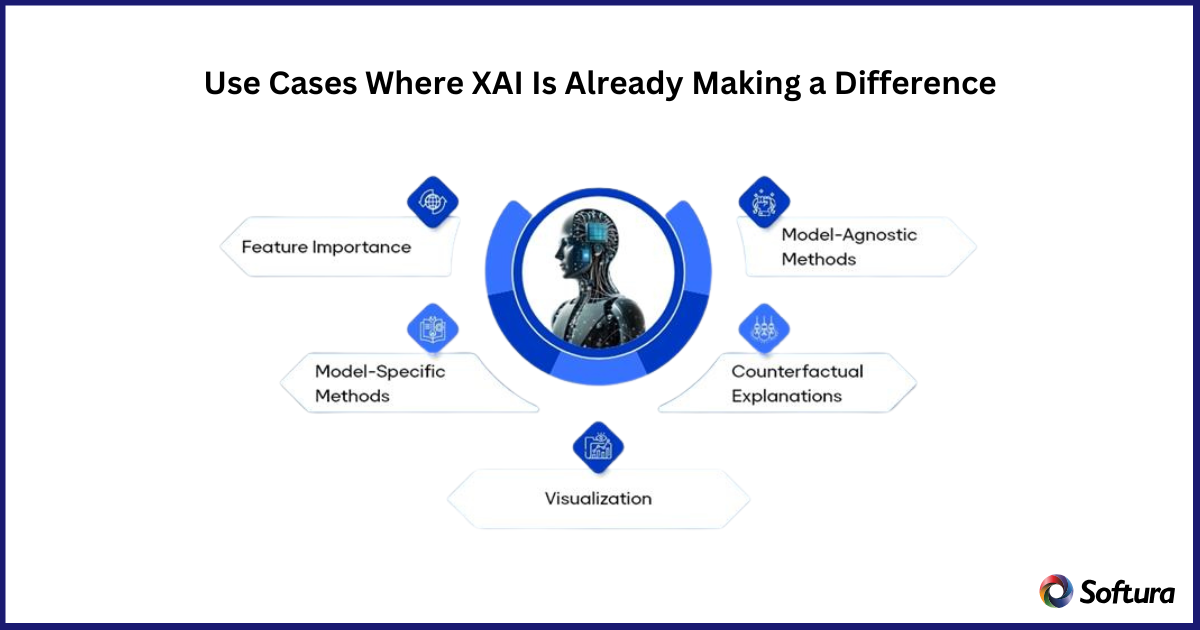

Explainable AI is not just a concept for the future; it’s already making a real impact across a variety of industries and applications. When AI decisions affect people’s lives, businesses and organizations are turning to XAI to bring transparency and trust into the equation.

One of the most obvious areas where XAI shines is in healthcare. Imagine an AI system that helps doctors diagnose diseases or recommend treatments. With explainability, doctors can see why the AI suggested a particular diagnosis. This is crucial for making sure the recommendation makes sense and for explaining it to patients. It also helps medical teams catch errors or biases before they affect patient care.

Finance is another sector where XAI is gaining serious traction. When an AI model decides whether to approve or deny a loan, customers and regulators want to know the reasoning behind that decision. XAI helps banks provide clear explanations based on factors like credit score, income, and payment history. This transparency not only builds trust but also helps financial institutions comply with regulations and avoid discriminatory practices.

In the world of hiring and human resources, AI tools are increasingly used to screen candidates. But without explainability, these systems risk unfair bias or unclear decision-making. XAI allows HR teams to understand which candidate traits influenced the AI’s recommendations, helping ensure the process is fair and accountable.

Even in areas like criminal justice and law enforcement, where AI is used for risk assessments or predictive policing, explainability plays a critical role. It helps prevent unfair targeting or biased decisions by making it clear how the model reached its conclusions. This is vital for maintaining public trust and upholding ethical standards.

Beyond these examples, XAI is making waves in industries like retail, where it helps personalize customer experiences by explaining recommendations, and in manufacturing, where it assists with quality control by identifying why a defect occurred.

These use cases show that explainable AI is not just a technical luxury; it’s a practical necessity. By making AI decisions transparent and understandable, XAI helps organizations make better decisions, build trust with stakeholders, and use AI responsibly.

Explainable AI is gaining momentum, but it’s still very much a work in progress. As AI continues to become a bigger part of our daily lives, the need for transparency will only grow. So what can we expect in the future of XAI? Let’s dive into some of the key trends, challenges, and opportunities shaping what’s next.

One big trend is the push toward more user-friendly explanations. Right now, many explainability methods are designed for data scientists and AI experts. The future is about making those explanations accessible to everyone, from business leaders to everyday users, so they can understand AI decisions without needing a PhD in machine learning. This means creating visual tools, simple language summaries, and interactive interfaces that demystify AI outputs.

Another trend is integrating XAI directly into AI development tools and platforms. Instead of tacking explainability on as an afterthought, developers are building models with transparency in mind from the start. This “explainability by design” approach promises to make AI systems easier to audit, debug, and improve over time.
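One simple way to picture "explainability by design" is a model that cannot return a decision without also returning the reason. The sketch below uses an ordered rule list for that purpose. The rules, field names, and thresholds are hypothetical and chosen only to illustrate the pattern.

```python
from typing import Callable, NamedTuple

class Rule(NamedTuple):
    description: str
    applies: Callable[[dict], bool]
    decision: str

# Hypothetical screening rules, checked in order; the first match decides.
RULES = [
    Rule("payment missed in the last 12 months", lambda a: a["missed_payments"] > 0, "refer to a human reviewer"),
    Rule("debt above 40% of income", lambda a: a["debt"] > 0.4 * a["income"], "deny"),
]
DEFAULT = Rule("no risk rule matched", lambda a: True, "approve")

def decide(applicant: dict) -> tuple[str, str]:
    """Return the decision together with the description of the rule that produced it."""
    for rule in RULES + [DEFAULT]:
        if rule.applies(applicant):
            return rule.decision, rule.description
    raise AssertionError("unreachable: DEFAULT always applies")

decision, reason = decide({"missed_payments": 0, "debt": 30_000, "income": 50_000})
print(f"{decision}: {reason}")
```

Because the reason travels with every decision, auditing and debugging start from a readable trail rather than a reconstruction after the fact. The trade-off is that a rule list this small will rarely match the accuracy of a more complex model.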

But with progress come challenges. One of the biggest is balancing explainability with performance. Sometimes, the most accurate models are the hardest to interpret. Finding ways to simplify explanations without sacrificing quality is a tricky problem researchers are still working to solve. There’s also the challenge of standardizing what counts as a good explanation, since different industries and use cases have different needs.

Privacy is another concern. Explaining AI decisions often means revealing details about the data the model used. Ensuring that explanations don’t expose sensitive or personal information will require smart strategies and safeguards.

On the opportunity side, XAI opens the door to wider adoption of AI across sectors that have been cautious so far. Industries like healthcare, finance, and government are more likely to embrace AI when they can clearly understand and trust it. This means new business models and services built around transparent AI could emerge.

Additionally, as regulators step up AI oversight, explainability will become a key compliance requirement. Organizations that get ahead of this curve will not only avoid legal headaches but gain a competitive advantage by building trust with customers and stakeholders.

In short, the future of explainable AI is full of promise but also complexity. It will take collaboration between researchers, businesses, regulators, and users to navigate this path. But one thing is clear: making AI understandable is no longer optional; it’s essential for AI to truly benefit society.

As explainable AI becomes critical to building trustworthy and ethical machine learning solutions, having the right partner is more important than ever. Softura combines deep expertise in AI, machine learning, and Microsoft technologies with a strong focus on transparency and compliance. We help organizations design and implement XAI solutions that not only deliver powerful insights but also build trust with users and regulators alike.

If you’re ready to move beyond the black box and make AI decisions clear, fair, and accountable, Softura is here to guide you every step of the way. Let’s work together to create AI you and your stakeholders can truly understand and rely on.

👉 Contact Softura today to start your journey toward explainable AI.

Build AI You Can Trust

Leverage Softura’s expertise to design transparent, fair, and future-ready machine learning solutions.